Here’s the exact post that got the Proton CEO in trouble:

Maybe Gail Slater really is a great pick for Assistant Attorney General for the Antitrust Division. Frankly, I have no idea. But I won’t do business with any company that carries any water whatsoever for Trump.

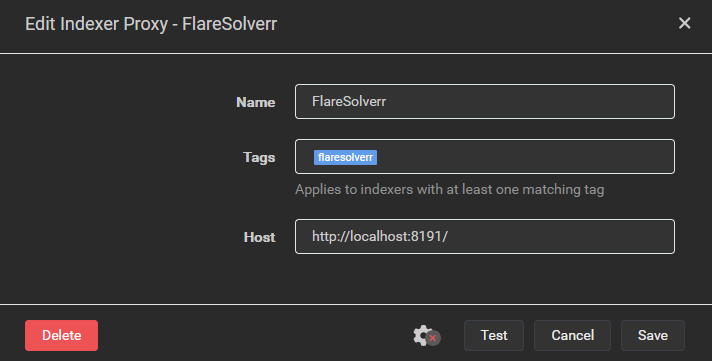

I switched to AirVPN about 6 months ago and I’ve been really happy with the service. I was previously using NordVPN, which was fine, but I wanted a VPN provider that offers port forwarding, and AirVPN does. I don’t have hard stats on this, but I do feel that having access to port forwarding has improved my overall torrent speeds since switching.