- 95 Posts

- 306 Comments

9 · 2 years ago

Cool. So nice to hear. Congratulations.

145 · 2 years ago

“… and then he told me I should be a stay-at-home wife, and I accepted, we had 5 kids after we initially agreed to only have one but he pressured me, we moved to his hometown where I knew no one, he controlled all of my finances, after 12 years of marriage I confronted him for cheating on me but he always denied it, until I found his second family but I couldn’t leave him because I had no money of my own and lived far away from my family, then he became physically abusive and kept telling me that I was nothing without him, I needed help to leave him but I’m still fighting in court over child support and shared custody, but he is now with his third family and refuses to pay for anything, I should have left him a long while ago but I couldn’t see the red flags and even my family thought at the time it was a bad idea to marry him but they knew I wouldn’t listen because I was 20 and naïve”

I’ve seen so many cases with different variations.

3 · 2 years ago

“After this, I’m retiring”

2 years later: Hayao Miyazaki is making a new movie.

I look at the sky through my window. I never understood the need for weather apps.

Transitioning.

1·2 年前

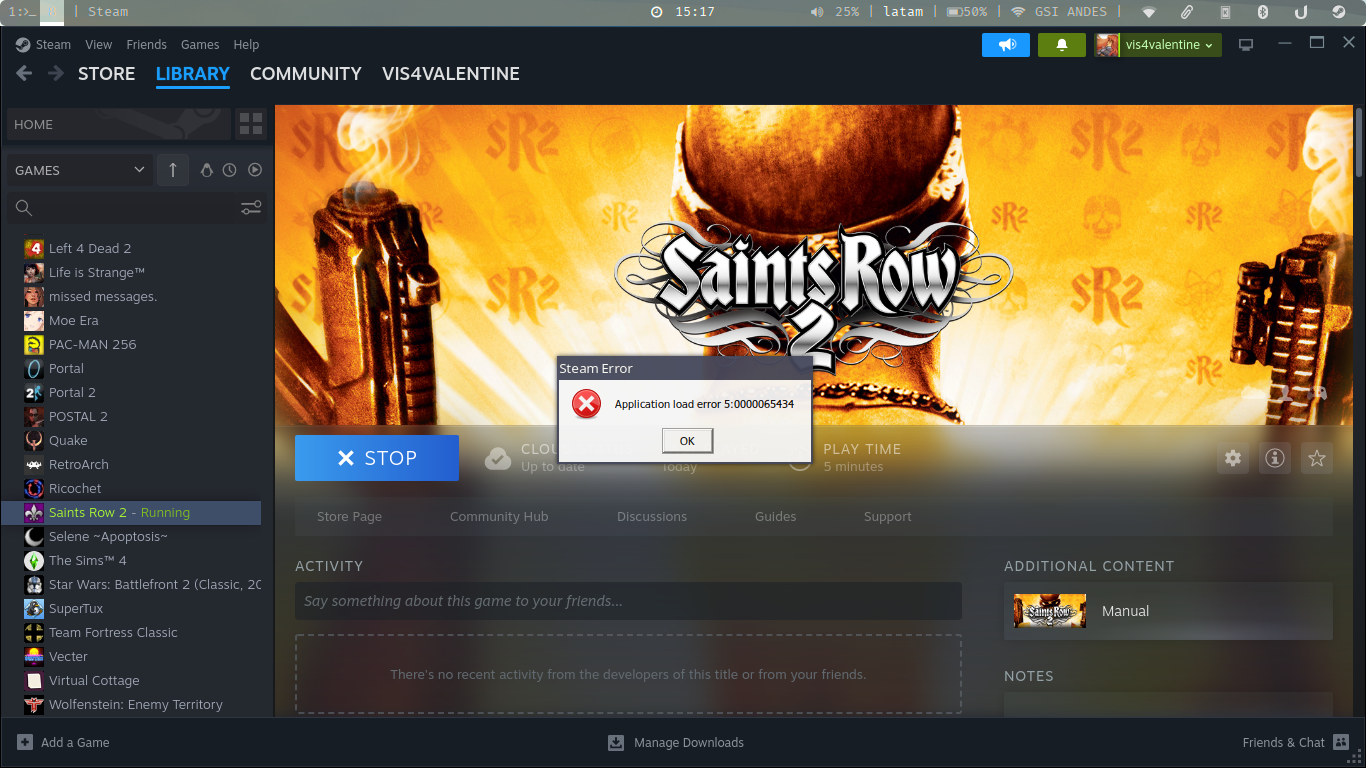

1·2 年前Hi, I install the libva.so from AUR and also I did this:

steam-runtime --resetAnd seems to work, but now I have a different problem, this one:

I now need to find out how to fix this one.

1 · 2 years ago

I would like to know what library that might be.

2 · 2 years ago

I see only a saintsrow2.i386 file in the game folder.

753 · 2 years ago

Classic fascist narrative: The enemy is both strong and dangerous, and weak and dumb.

2947 · 2 years ago

Yes, that is true until you have been on estrogen and testosterone blockers for 2+ years; then your advantages go away.

141 · 2 years ago

I think someone who has been forced to do hard labor since birth is of course gonna be stronger than a master who can’t wipe his own ass without 15 servants helping him, so they gotta think Black people are naturally stronger.

201 · 2 years ago

“A N***** WANTS TO BE PRESIDENT. AMERICA HAS LOST ITS WAYS TO INSANITY”

“F*****S PARADE AROUND THE CITY AND THEY WERENT SHOT AT FIRST SIGHT”

“PATRIOT ARRESTED FOR BURNING CROSSES”

“PEOPLE CLAIMING STATE AND CHURCH SHOULD BE SEPARATED ARE NOT FIT FOR OFFICE, THEY ARE COMMUNIST TRAITORS”

71 · 2 years ago

Button shirts.

The day I’m fully out as trans, I’ll never touch a button shirt again. I wanna have all black blouses.

9 · 2 years ago

Well, I have that, the thing is, I clean my dick (while flaccid) multiple times a day.

I don’t care because I’m a bottom, so it’s not a problem for me.

I don’t know who made it.

You can try and track the artist.

41 · 2 years ago

I know an old man who is a Trump supporter, conspiracy theorist, flat earther, and reptilian Illuminati believer.

Even he agrees that Miley is a nutjob unfit for being president.

Thank you!